California has 576 million drivers, and other AI lies

If Code Interpreter says it's true, who am I to judge?

In my last post, I said that it was inevitable that some hapless, non-quantitative marketing executive would unthinkingly rely on output provided by OpenAI’s Code Interpreter plugin for ChatGPT. And, well, here we are. My assumed hapless non-quantitative marketing executive found a dataset1 of licensed drivers in the United States’ 50 states and DC.

I uploaded the dataset to ChatGPT and provided the following prompt: Review the data in this file, provide a descriptive analysis of it, and then suggest some analyses that can be run on it.

Rather than bore you with the details of what Code Interpreter found in its initial review of the data, I’ll just tell you how the data are structured, as this is pertinent to the point of this post. The columns are:

Year

Gender

Cohort (age group)

State

Drivers

So, we have data, arranged by year, cohort, and state (including Washington, DC), of licensed drivers. One analysis which Code Interpter suggested was a geospatial analysis: a graphical analysis of how the data are distributed across geography. In other words, it proposed creating a graphical representation of which states have the most licensed drivers. As this kind of analysis is merely a linear function of the state’s overall population, and I already know the population distribution of the United States, this didn’t especially interest me.

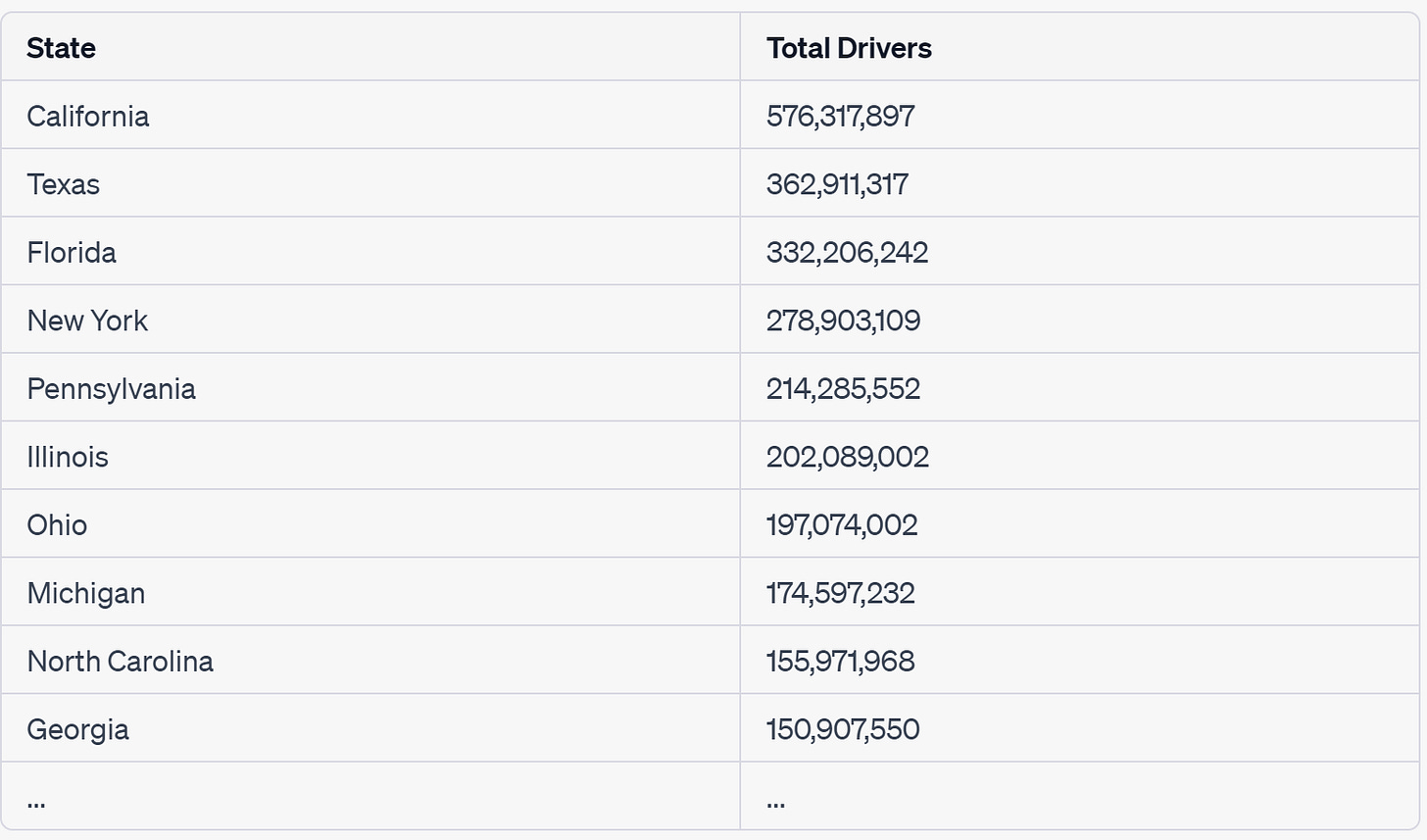

However, I was curious to see the geospatial data in a table format. I wanted to see a table of the number of licensed drivers in each state. So here’s my prompt: Do a geospatial analysis. However, don’t visualize this on a map. Rather, compile a table.

Here’s the code that Code Interpreter generated for this task:

# Let's first group the data by state and then sum up the number of drivers for each state

state_data = data.groupby('State')['Drivers'].sum().reset_index().sort_values(by='Drivers', ascending=False)

# Display the table

state_data

Then it displayed the output:

Surely you can see the problem! California can’t have 576 million licensed drivers if the population of the United States is only 330 million or so. But it requires a human to know this. A human knows that 330 million is less than 576 million, and that if the United States’ population as a whole is only 330 million, a sub-unit of the United States can’t have a population greater than that number. 330,000,000 < 576,000,000 ought to be evident to a ten-year-old. But it not evident, apparently, to our (trusty?) AI.

But it gets even better. ChatGPT confidently says, of its Code Interpreter-mediated output: “This table allows us to see which states have the highest total number of drivers. As expected, states with larger populations like California, Texas, and Florida, have the highest numbers of drivers.” While that statement is obviously true, and the data provided support the claim, the data are still wrong!

Curious to figure out what it was “thinking”, I responded Explain how you calculate the number of drivers in California. What are the specific pieces of data which are summed?

Here’s its response:

The total number of drivers in California is calculated by summing the ‘Drivers’ value for all records where the ‘State’ is California.

(Emphasis is mine.)

Hopefully you can see the problem here. Code Interpreter doesn’t understand this dataset as well as it seems to. It doesn’t understand that to calculate the number of licensed drivers in a particular state, you need to choose one particular year for your calculation. A cumulative sum of licensed drivers in a state over the time period of data collected makes zero sense. And yet, that is what Code Interpreter dutifully did.

My response: Right, the cumulative total is not the correct analysis here. For a geospatial analysis, you need to pick one year, and provide a table of state-level numbers for that particular year. For example, provide a table which shows number of drivers in each state for the year 2018.

Here’s the code it generated in response to this instruction:

# Filter the data for the year 2018

data_2018 = data[data['Year'] == 2018]

# Group by state and sum up the number of drivers for each state

state_data_2018 = data_2018.groupby('State')['Drivers'].sum().reset_index().sort_values(by='Drivers', ascending=False)

# Display the table

state_data_2018And here’s the table it outputted:

This seems much more accurate.

The conclusion we ought to draw from this exercise in analytical error is exactly what I proposed in my previous post: the person requesting the analysis has to understand the underlying data, its structure, and its limitations. Relying solely on ChatGPT’s analytical judgment is a fraught exercise, as we saw with the lawyer who relied on it for precedential cases favorable to his client.

ChatGPT and its Code Interpreter plugin are extraordinaily powerful tools. But because they are so powerful, they have to be wielded intelligently by the people who use them. Blindly accepting its output is perilous.

The dataset, and its accompanying license, are available here. It ought to go without saying that all of my analysis of Code Interpreter’s analytical error relies on the assumption that the data in the dataset is itself accurate. As the description provided at the link indicates that these data were collected from data.gov, I think it’s fairly reasonable to assume that the dataset is a more or less accurate compilation of licensed drivers in each of the states, plus DC, for the time periods provided.

We are definitely at the "try to break the model" phase. This is a super important phase with any emerging tech I want to actually use -- I have to make sure I fully understand what it CAN'T do, then figure out what I can do with it.

Of course, the things we can't do tend to get lower over time, too!

Great example, and important. Both a reasonable wrong request wording, and a far better request wording. Total # of CA drivers ( over many years) at 576 mln , is less good than total # of CA drivers in 2018. 27 mln is the (more?) right answer. More folks should guess first. I would have guessed 3/5s - 3/4s or up to 4/5s of CA pop of 36 mln.

Smart folk willing to estimate first, then check more carefully if the AI answer is outside the range, seem more likely to avoid wildly wrong answers.